Tim Cook, Apple's CEO, sent a clear signal that the company is pushing forward with AI device development. In a major employee meeting earlier in February, he stated, “Apple is developing a new product category powered by AI,” and added, “We are very excited about this.” He also emphasized that the company is investing in new technologies because “the world is changing rapidly.”

. . . “Visual Intelligence” is positioned as Apple's new defining feature in the wearable device market, where tech giant Meta has already established dominance with its smart glasses and OpenAI is accelerating its own development of wearable devices.

Looking back to 2013, years before the Apple Watch launch, Tim Cook remarked, “The sensor industry is heating up.” At that time, Apple was developing a smartwatch designed to function as a medical lab on the wrist, measuring heart rate, blood pressure, and blood sugar levels, and was actively recruiting experts from the medical sensor industry.

When the Apple Watch launched, it was not yet a full medical device, but Apple has steadily added new sensors since. It now includes features that detect high blood pressure and alert users to sleep apnea. Furthermore, a secret silicon team is developing a non-invasive blood sugar sensor.

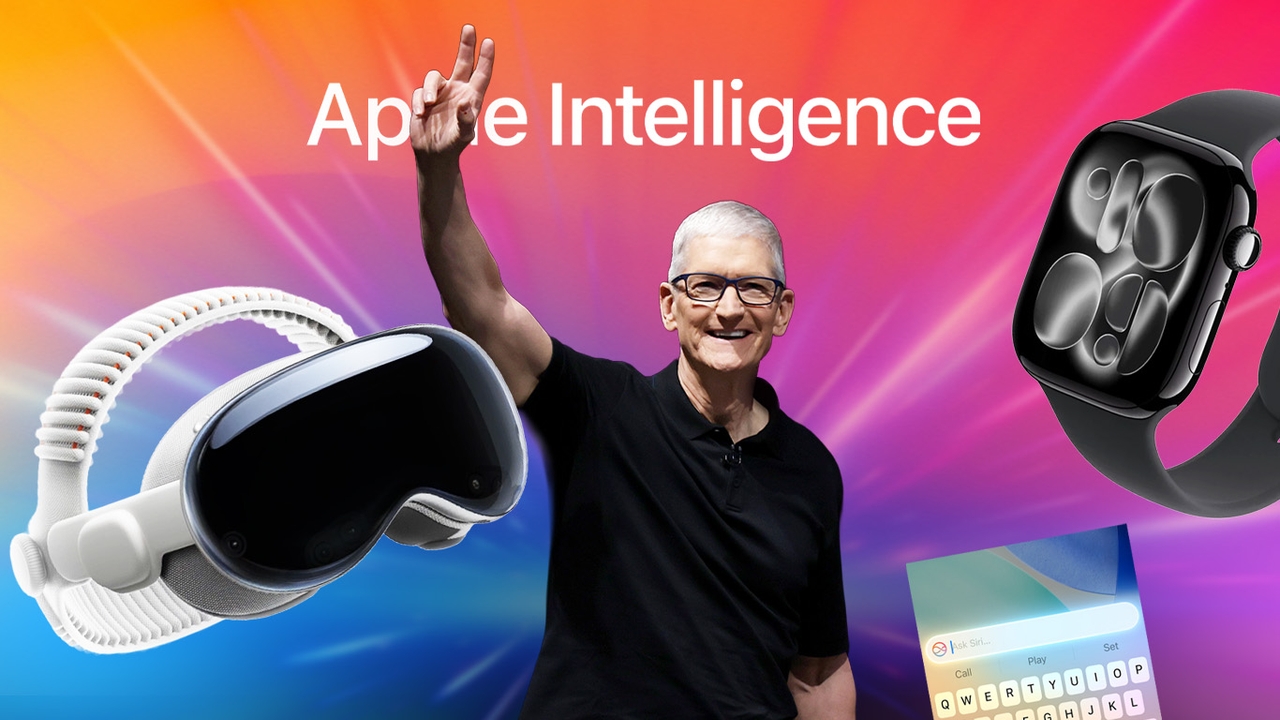

Following the Apple Watch, Apple ventured into a new market with a mixed-reality headset. Tim Cook has repeatedly hinted at the potential of AR and VR, which eventually merged into a single product, the Apple Vision Pro.

In 2016, Tim Cook said AR would be as important as eating three meals a day. Apple invested several billion U.S. dollars to develop the Vision Pro, unveiling it in 2023 and releasing it in 2024. Although sales have not been blockbuster, the product clearly reflects Apple’s intent to dominate this market segment.

Most recently, according to Mark Gurman’s Bloomberg article, Tim Cook has begun discussing a new product category: AI wearable devices powered by a technology Apple calls “Visual Intelligence.”

Visual Intelligence debuted on the iPhone 16 Pro in 2024 under the Apple Intelligence brand before expanding to other devices. Users can take photos or screenshots and immediately ask questions about those images via ChatGPT or search images through Google.

However, Apple does not intend to rely on other companies’ technologies indefinitely. The company is developing its own models and plans to center this technology in new AI devices, including upgraded AirPods, smart glasses, and even a pendant resembling a necklace charm equipped with computer vision cameras and sensors. (Previously, Apple reportedly developed a watch with a camera for this purpose but shelved the project.)

Visual Intelligence offers basic uses such as photographing a plate of food to identify its type and ingredients. More advanced features include providing specific guidance based on what is seen—for example, instructing users to pass “the red building ahead” instead of just giving a distance in feet, or alerting them to perform certain actions when approaching designated objects or places.

During the latest quarterly earnings report, Tim Cook highlighted Visual Intelligence as one of the popular features that help users search, answer questions, and take action more quickly. In a recent employee meeting, he reiterated that Apple holds a huge advantage in the AI arena with over 2.5 billion devices worldwide, signaling a strong likelihood that Apple will bring this product to market.

When Mark Gurman asked Apple how the company plans to compete in the AI wearable device market against existing players, the response was that Apple’s smart glasses development team is confident its product will be superior. Despite Meta’s experience and its partnerships with Ray-Ban and Oakley, the key differences lie in the ecosystems behind Siri and Meta AI and in the hardware approaches. Meta uses a single camera that switches modes, while Apple plans separate lenses for each function and premium materials. Both sides believe their approach is the right solution.
