Meta has launched a new custom-designed AI chip to support the company’s plans to expand its massive Data Centers over the coming years. During 2026 and 2027, the company will introduce four chips in the Meta Training and Inference Accelerator (MTIA) series, intended for both core social media platform tasks and Generative AI development.

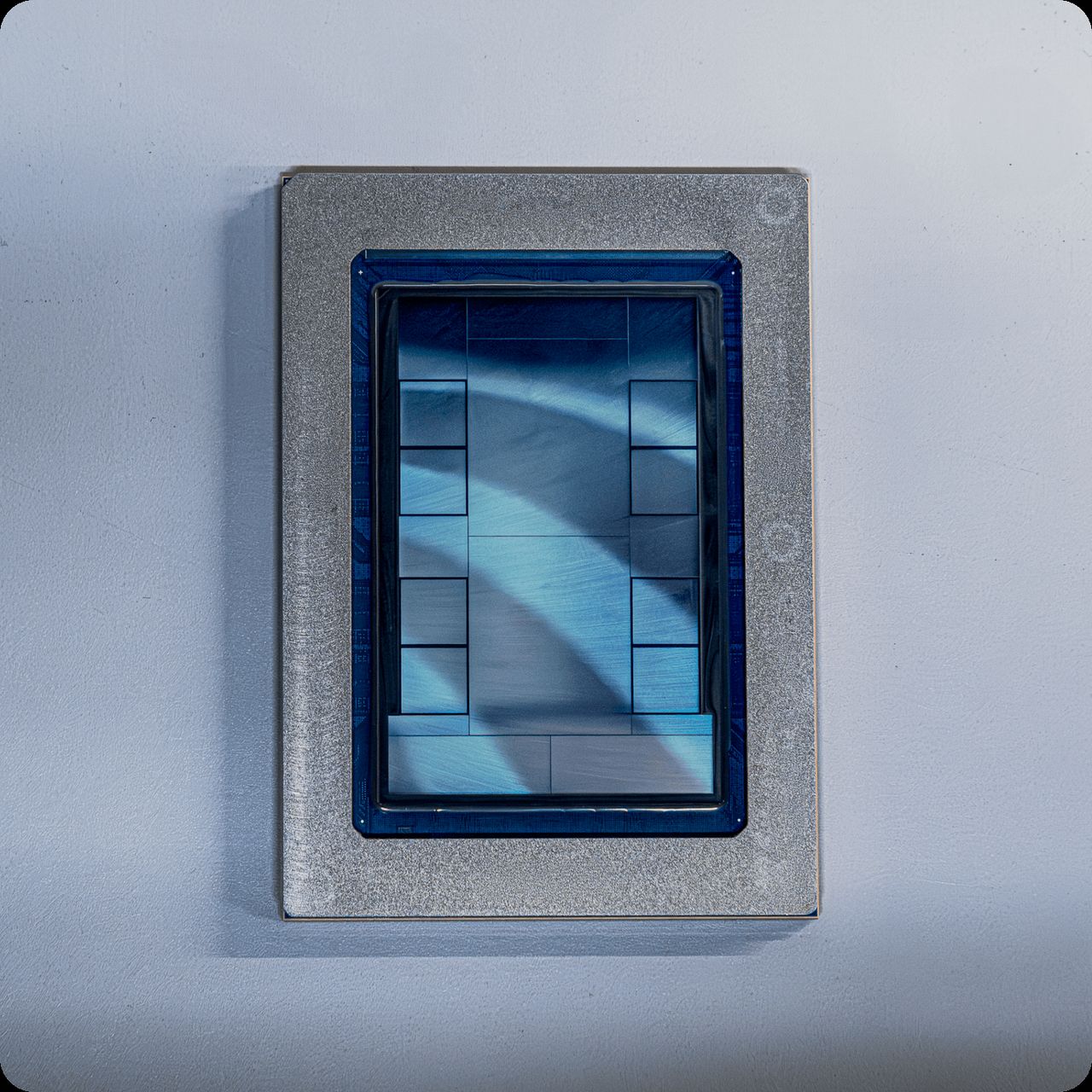

The MTIA chip line is a family of AI-focused processors designed by Meta itself. Meta unveiled the concept and the first version of the series in 2023 and released the second version in 2024. Most recently, on 11 March 2026 (GMT+7), Meta introduced the third version, MTIA 300, and said it plans to launch three additional models soon: MTIA 400, MTIA 450, and MTIA 500.

Yee Jiun Song, Meta’s Vice President of Engineering, said the self-designed chips, manufactured by Taiwan Semiconductor Manufacturing Company (TSMC), let Meta improve both the performance and the cost efficiency of its Data Center systems more than it could by relying solely on other chipmakers.

He explained, “Designing our own chips also diversifies our silicon supply chain and helps mitigate the impact of fluctuating chip prices in the market to some extent, while increasing our negotiating power.”

The latest chip released is the MTIA 300, which was deployed just weeks ago. This chip is designed to train small AI models that play a key role in ranking and recommendation systems on Meta’s social media platforms like Facebook and Instagram.

Examples of tasks that use these models include selecting and recommending the content each user should see and choosing the most relevant ads for each user.

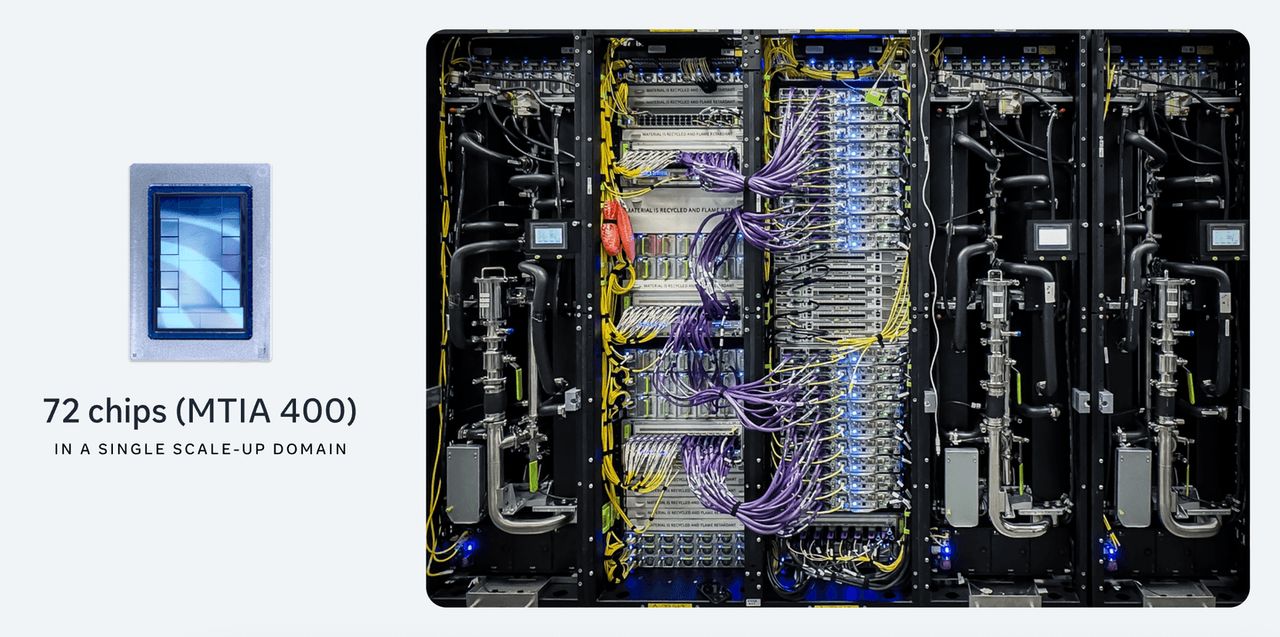

In addition to MTIA 300, Meta plans to launch three more chips: MTIA 400, MTIA 450, and MTIA 500. These chips are specifically designed to handle Generative AI inference tasks, such as generating images from text and creating videos from prompts.

However, Yee Jiun Song confirmed that the MTIA chips will not be used to train very large models such as large language models (LLMs).

Currently, Meta has completed testing MTIA 400 and is preparing to deploy it in Data Centers, while MTIA 450 and MTIA 500 are scheduled for use in 2027.

Yee Jiun Song also noted that releasing new chips at this frequency is unusual in the semiconductor industry because “chip companies normally do not launch new models every six months, so this pace is very rapid.”

The main reason for this pace is that Meta is rapidly expanding its Data Center capacity and planning massive investments, which requires using the most up-to-date chips at all times. Each chip model is expected to have an average lifespan of more than five years.

The new MTIA chips will include increased High-Bandwidth Memory (HBM) to support Generative AI workloads. However, the accelerated AI investments across the industry have started to create shortages in memory chips.

Yee Jiun Song acknowledged Meta’s concerns about HBM supply: “But we believe we have secured sufficient supply for our planned system expansions,” he said, mentioning a strategy of diversifying the supply chain.

Although it is developing its own chips, Meta continues to invest in GPUs from major manufacturers. Recently, Meta made deals to deploy millions of Nvidia GPUs in its Data Centers and plans to use up to 6 gigawatts of AMD GPUs over several years.

Yee Jiun Song stated, “AI workloads evolve very rapidly, so we want to ensure we have multiple options” to support the 30 Data Centers Meta currently operates or plans to build, with 26 located in the United States.
